How Agentic AI Is Transforming SOC Investigations: Design Patterns from the Frontlines

A team of semi-autonomous AI Agents led by a supervisor agent can find root cause and make recommendations faster than your best analyst. Here's what the interaction actually looks like.

By Greg Nudelman | Originally published at UXforAI.com, adapted for SOCForge.ai

By all accounts, AI Agents are already here – they are just not evenly distributed. (1) However, few examples yet exist of what a good security operations experience of interacting with AI agents actually looks like. Fortunately, at the recent AWS re:Invent conference, I came upon an excellent example of what agentic SOC investigation might look like, and I am eager to share that vision with you in this article. But first, what exactly are AI Agents?

What are AI Agents?

Imagine an ant colony. In a typical ant colony, you have different specialties of ants: workers, soldiers, drones, queens, etc. Every ant in a colony has a different job – they operate independently yet as part of a cohesive whole. You can "hire" an individual ant (agent) to do some simple semi-autonomous job for you, which in itself is pretty cool. However, try to imagine that you can hire the entire ant hill to do something much more complex: figure out what's wrong with your system, investigate a security incident, or trace an anomaly across your entire infrastructure. No ant is very smart on its own – each is instead highly specialized to do a particular job. However, put together, the different specialties of ants exhibit a kind of "collective intelligence" that we associate with higher-order animals.

The most significant difference between "AI," as we've been using the term, and AI Agents is autonomy. You don't need to give an AI Agent precise instructions or wait for synchronized output – the entire interaction with a set of AI Agents is much more fluid and flexible, much like an ant hill would approach solving a problem.

How Do AI Agents Work?

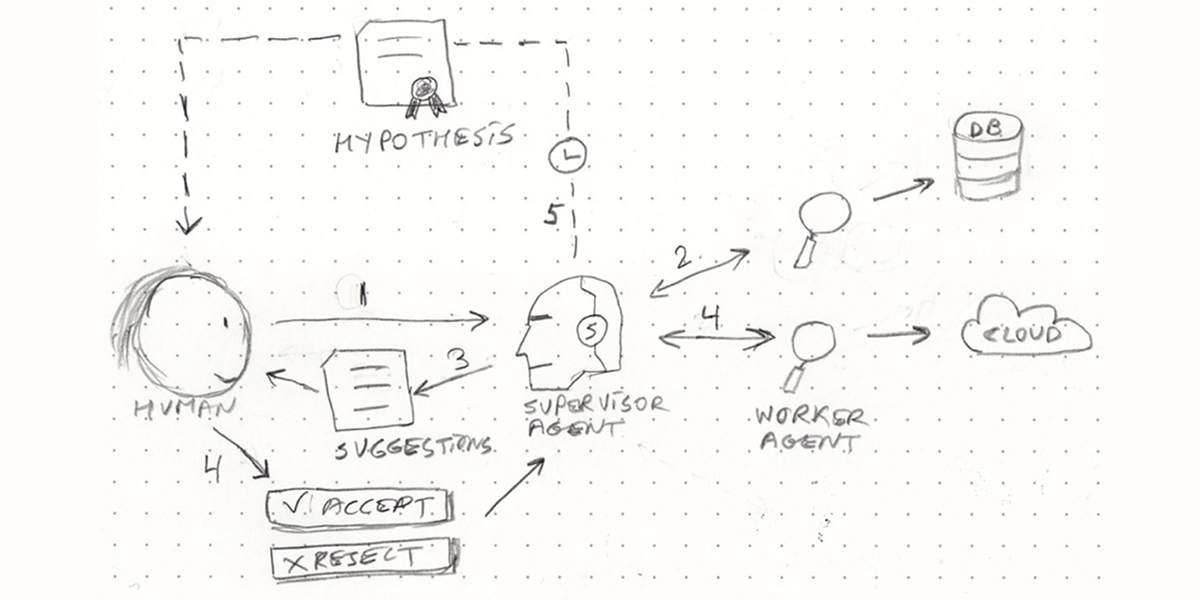

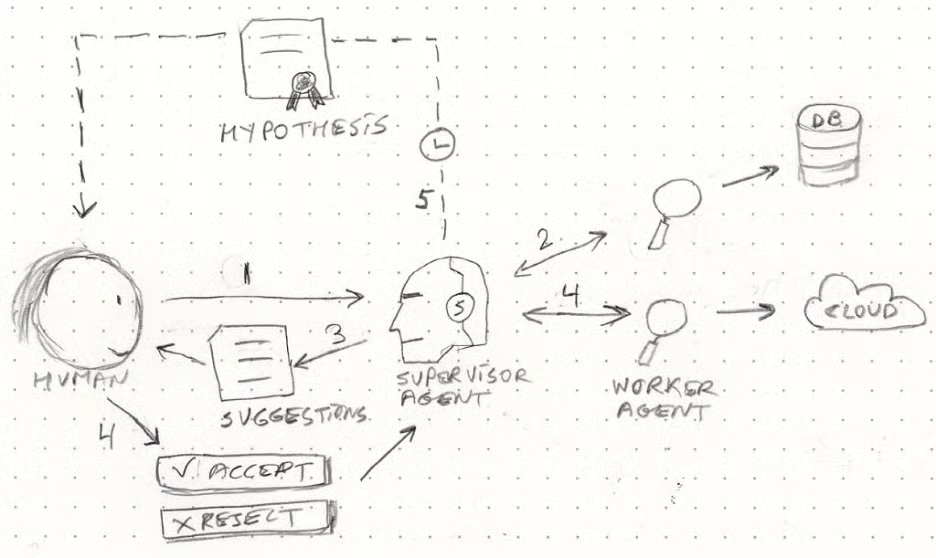

There are many different ways that Agentic AI might work – it's an extensive topic worthy of its own book. In this article, we will use troubleshooting a problem on a system as an example of a complex flow involving a supervisor agent (also called a "Reasoning Agent") and some Worker Agents. The flow starts when a human operator receives an alert about a problem. They launch an investigation, and a team of semi-autonomous AI Agents led by a supervisor agent helps them find the root cause and makes recommendations about how to fix the problem.

A multi-stage agentic workflow has the following steps:

- A human operator issues a general request to a Supervisor AI Agent.

- The Supervisor AI Agent then spins up and issues general requests to several specialized semi-autonomous Worker AI Agents that start investigating various parts of the system, looking for the root cause (Database).

- Worker Agents bring back findings to the Supervisor Agent, which collates them as Suggestions for the human operator.

- Human operator Accepts or Rejects various Suggestions, which causes the Supervisor Agent to spin up additional Workers to investigate (Cloud).

- After some time going back and forth, the Supervisor Agent produces a Hypothesis about the Root Cause and delivers it to the human operator.

Much like a manager in a human organization you might contract, a supervisor AI agent has a team of specialized AI agents at its disposal. The supervisor can route a message to any of the AI worker agents under its supervision, which will do the task and communicate back to the supervisor. The supervisor may choose to assign the task to a specific agent and send additional instructions at a later time when more information becomes available. Finally, when the task is complete, the output is communicated back to the user. A human operator then has the option to give feedback or additional tasks to the Supervising AI Agent, in which case the entire process begins again. (3)
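The routing described above can be sketched in a few lines of Python. This is a minimal illustration, not the architecture from the re:Invent demo: the `Finding`, `Supervisor`, `metrics_worker`, and `tracing_worker` names are all hypothetical, and the workers return canned text where a real system would query telemetry or call a model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Finding:
    agent: str    # which worker produced the finding
    summary: str  # short text the supervisor can surface as a suggestion


# A worker is modeled as a plain function: request text in, findings out.
Worker = Callable[[str], List[Finding]]


def metrics_worker(request: str) -> List[Finding]:
    # Hypothetical specialist; a real worker would query service metrics.
    return [Finding("metrics", f"fault rate spike related to: {request}")]


def tracing_worker(request: str) -> List[Finding]:
    # Hypothetical specialist; a real worker would inspect traces.
    return [Finding("tracing", f"slow downstream call related to: {request}")]


@dataclass
class Supervisor:
    workers: Dict[str, Worker] = field(default_factory=dict)

    def investigate(
        self, request: str, only: Optional[List[str]] = None
    ) -> List[Finding]:
        """Route the request to all workers (or a named subset) and collate."""
        names = only if only is not None else list(self.workers)
        findings: List[Finding] = []
        for name in names:
            findings.extend(self.workers[name](request))
        return findings


supervisor = Supervisor({"metrics": metrics_worker, "tracing": tracing_worker})
for finding in supervisor.investigate("bot-service faults"):
    print(f"[{finding.agent}] {finding.summary}")
```

The operator only ever talks to `Supervisor.investigate`; the `only` parameter stands in for the supervisor's choice to assign follow-up work to a specific agent.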

The human does not need to worry about any of the internal stuff – all that is handled in a semi-autonomous manner by the supervisor. All the human does is state a general request, then review and react to the output of this agentic "organization." And much like in the ant colony, the individual specialized agent does not need to be particularly smart or to communicate with the human operator directly – they need only to be able to semi-autonomously solve the specialized task they are designed to perform and be able to pass precise output back to the supervisor agent, and nothing more. It is the job of the supervisor agent to do all of the reasoning and communication. This AI model is more efficient, cheaper, and highly practical for security operations.

Use Case: CloudWatch Investigation with AI Agents

For simplicity, we will follow the workflow diagram earlier in the article, with each step in the flow matching that in the diagram. This example comes from AWS re:Invent 2024 - Don't get stuck: How connected telemetry keeps you moving forward (COP322). (2)

Step 1

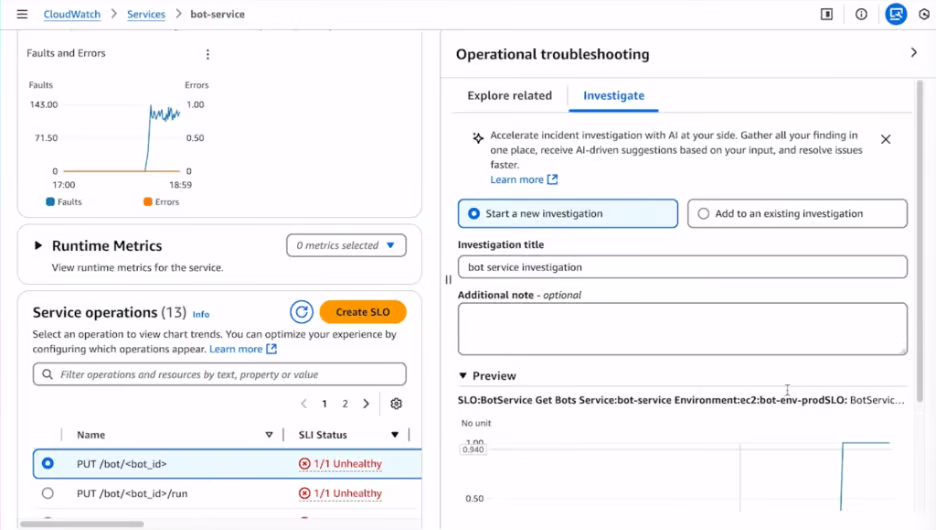

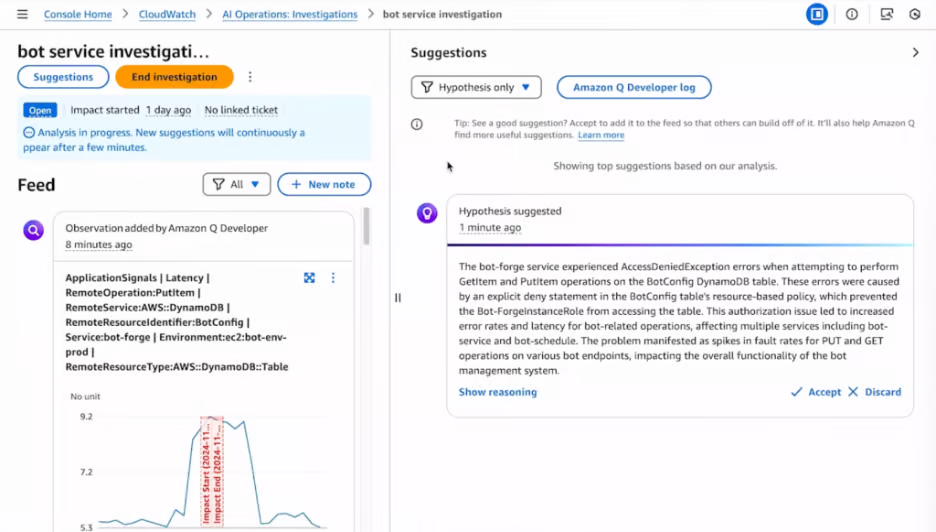

The process starts when the user finds a sharp increase in faults in a service called "bot-service" and launches a new investigation. The user then passes all of the pertinent information and perhaps some additional instructions to the Supervisor Agent.

Step 2

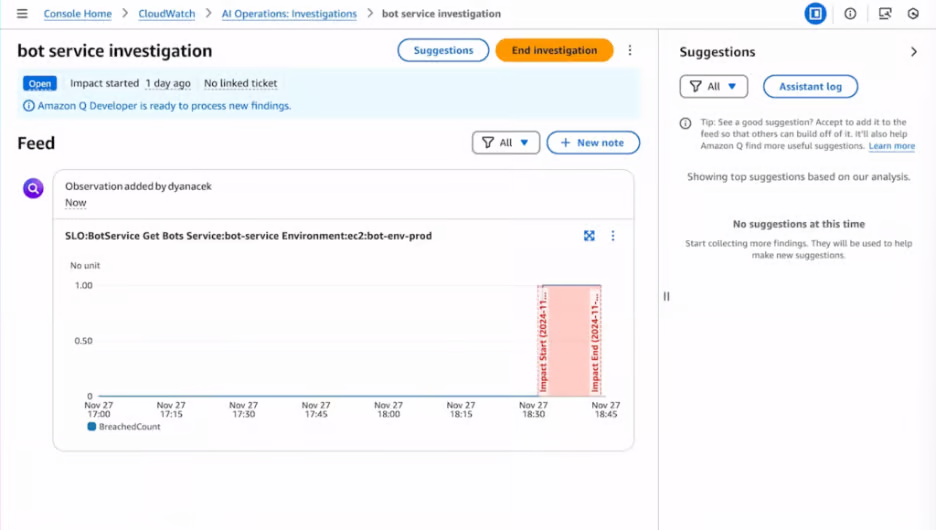

Now the Supervisor Agent receives the request and spawns a bunch of worker AI agents that will be semi-autonomously looking at different parts of the system. The process is asynchronous, meaning the initial state of suggestions on the right is empty: findings do not come immediately after the investigation is launched.
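To make the asynchronous behavior concrete, here is a small sketch using Python's standard `asyncio` module. The `worker` coroutine and its delays are stand-ins invented for illustration; the point is only that the suggestions list starts empty and fills in as each worker finishes.

```python
import asyncio
from typing import List


async def worker(name: str, delay: float) -> str:
    # Stand-in for a real investigation; the delay simulates slow queries.
    await asyncio.sleep(delay)
    return f"{name}: suggested observation"


async def run_investigation(suggestions: List[str]) -> None:
    tasks = [
        asyncio.create_task(worker("metrics", 0.01)),
        asyncio.create_task(worker("tracing", 0.05)),
    ]
    # Findings are appended in completion order, not launch order.
    for finished in asyncio.as_completed(tasks):
        suggestions.append(await finished)


def launch() -> List[str]:
    suggestions: List[str] = []  # the suggestions panel starts out empty
    asyncio.run(run_investigation(suggestions))
    return suggestions


print(launch())
```

A real UI would render each suggestion the moment it is appended rather than waiting for `launch()` to return.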

Step 3

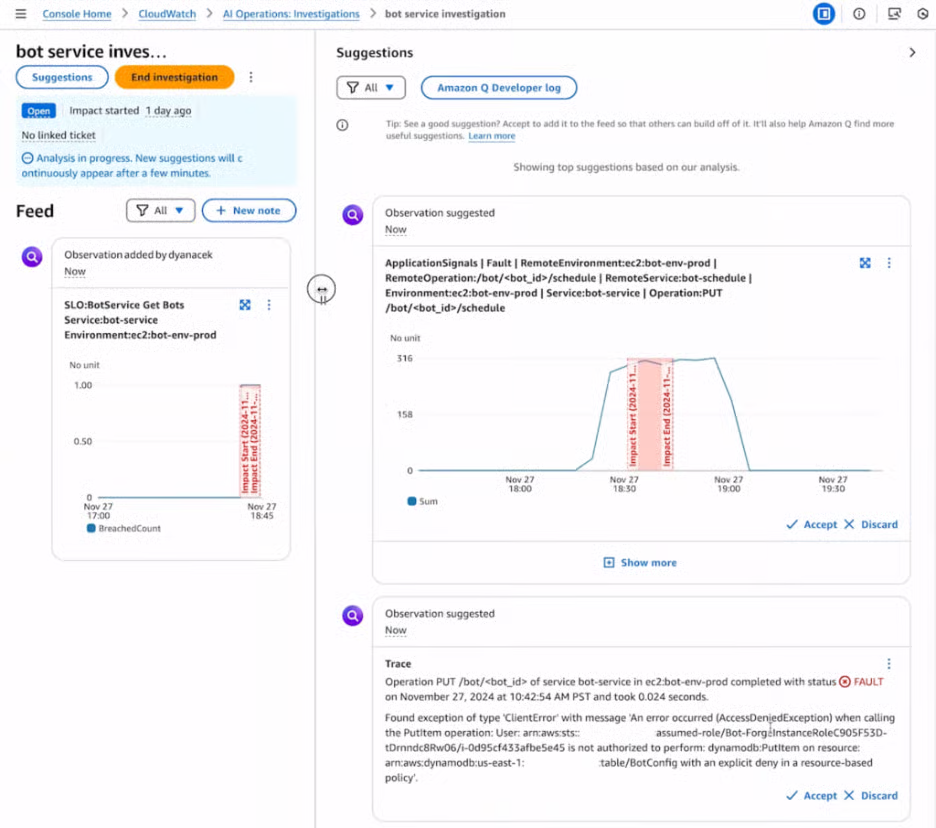

Now the worker agents come back with some "suggested observations" that are processed by the Supervisor and added to the Suggestions on the right side of the screen. Two very different observations are suggested by different agents, the first one specializing in the service metrics and the second one specializing in tracing.

These "suggested observations" form the "evidence" in the investigation, which is aimed at finding the root cause of the problem. The human operator helps here: they tell the Supervisor agent which of these observations are most relevant. Thus the Supervisor agent and the human work side by side to figure out the root cause of the problem.

Step 4

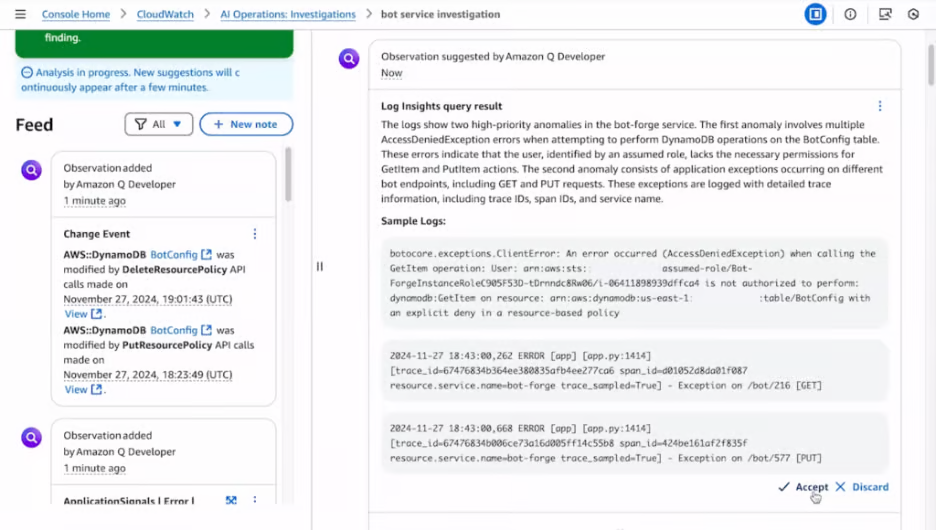

The human operator responds by clicking "Accept" on the observations they find relevant, and those are added to the investigation "case file" on the left side of the screen. Now that the human has indicated which information is relevant, the agentic process kicks off the next phase of the investigation. The supervisor agent stops sending "more of the same" and instead digs deeper, perhaps investigating a different aspect of the system as it searches for the root cause.
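One way to model this feedback step is a small state object that moves observations between the two panels. The sketch below is an assumption about how such state could be kept, not a description of the CloudWatch implementation; the `Investigation` class and its `next_focus` method are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Investigation:
    suggestions: List[str] = field(default_factory=list)  # right-hand panel
    case_file: List[str] = field(default_factory=list)    # left-hand panel
    rejected: Set[str] = field(default_factory=set)

    def accept(self, observation: str) -> None:
        # Accepted observations become evidence in the case file.
        self.suggestions.remove(observation)
        self.case_file.append(observation)

    def reject(self, observation: str) -> None:
        self.suggestions.remove(observation)
        self.rejected.add(observation)

    def next_focus(self, candidates: List[str]) -> List[str]:
        # Avoid "more of the same": drop anything the operator already saw.
        seen = set(self.case_file) | self.rejected | set(self.suggestions)
        return [c for c in candidates if c not in seen]


inv = Investigation(suggestions=["fault spike", "slow trace"])
inv.accept("fault spike")
inv.reject("slow trace")
print(inv.next_focus(["fault spike", "slow trace", "db latency"]))
```

In this toy version "digging deeper" is just filtering; a real supervisor would also use the accepted evidence to decide which workers to spin up next.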

Step 5

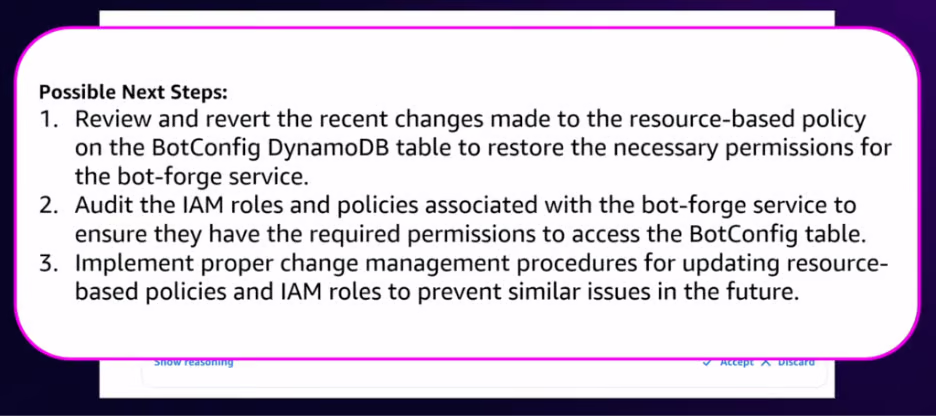

Finally, the Supervisor Agent has enough information to take a stab at identifying the root cause of the problem. Hence, it switches from evidence gathering to reasoning about the root cause. Like a literary detective, the Supervisor Agent delivers its "Hypothesis suggestion."

The suggested hypothesis is correct, and when the user clicks "accept" the Supervisor agent helpfully provides the next steps to fix the problem and prevent future issues of a similar nature.

Why This Matters for SOC Teams

At Sumo Logic, I architected exactly this kind of 4-agent autonomous security investigation platform. The results:

- Investigation time dropped from 60 minutes to under 3 minutes

- $21M ARR saved through reduced analyst workload

- Forrester rated the platform at 166% ROI

- Featured at AWS re:Invent 2025

The pattern described above isn't theoretical. It's in production. And it's the future of how SOC teams will work.

Key Takeaways for Security Operations

Flexible, adjustable UI: Agents work alongside humans. AI Agents require a flexible workflow that supports continuous interactions between humans and machines across multiple stages – starting investigation, accepting evidence, forming a hypothesis, providing next steps. It's a flexible looping flow crossing multiple iterations.

Autonomy: While human-in-the-loop is the norm for agentic security workflows, agents show remarkable abilities to come up with hypotheses, gather evidence, and iterate until they solve the problem. They do not get tired or run out of options and give up. However, this "aggressive" exploration requires new approval flows — an AI agent that autonomously takes remediation action without analyst consent is a recipe for disaster. (4)
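An approval flow can be as simple as refusing to execute anything an analyst has not signed off on. The following is a minimal sketch under that assumption; `ProposedAction` and `execute_if_approved` are hypothetical names, and the remediation itself is a placeholder lambda.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProposedAction:
    description: str              # what the agent wants to do
    execute: Callable[[], str]    # the remediation itself (placeholder here)
    approved: bool = False        # flipped only by a human analyst


def execute_if_approved(action: ProposedAction) -> Optional[str]:
    """Run the action only with analyst consent; otherwise return None."""
    if not action.approved:
        return None
    return action.execute()


action = ProposedAction("isolate host", lambda: "host isolated")
print(execute_if_approved(action))  # nothing runs without consent
action.approved = True              # analyst clicks "Approve"
print(execute_if_approved(action))
```

The design choice is that agents can propose freely, but the only code path that runs a remediation checks the `approved` flag first.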

New controls are required: Most agent actions are asynchronous, which means traditional transactional, synchronous request/response models are a poor match. Start, stop, and pause buttons are essential for controlling the agentic flow — otherwise you end up with the "Sorcerer's Apprentice" situation: self-replicating agents investigating without stopping, creating an expensive mess.
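As a rough illustration of such controls, the sketch below pairs start, pause, and stop with a hard step budget so that a runaway investigation halts on its own. All names (`RunState`, `InvestigationControl`, `run_agents`) are hypothetical, and the "work" is a list of strings rather than real agent tasks.

```python
from enum import Enum
from typing import List


class RunState(Enum):
    RUNNING = "running"
    PAUSED = "paused"
    STOPPED = "stopped"


class InvestigationControl:
    def __init__(self, max_steps: int) -> None:
        self.state = RunState.PAUSED
        self.max_steps = max_steps  # hard budget against runaway agents
        self.steps_taken = 0

    def start(self) -> None:
        if self.state is not RunState.STOPPED:  # stop is final
            self.state = RunState.RUNNING

    def pause(self) -> None:
        if self.state is RunState.RUNNING:
            self.state = RunState.PAUSED

    def stop(self) -> None:
        self.state = RunState.STOPPED

    def may_continue(self) -> bool:
        if self.steps_taken >= self.max_steps:
            self.state = RunState.STOPPED  # budget exhausted
        return self.state is RunState.RUNNING


def run_agents(control: InvestigationControl, work_items: List[str]) -> List[str]:
    done: List[str] = []
    for item in work_items:
        if not control.may_continue():  # checked before every step
            break
        done.append(item)               # placeholder for real agent work
        control.steps_taken += 1
    return done


control = InvestigationControl(max_steps=2)
control.start()
print(run_agents(control, ["check metrics", "check traces", "check logs"]))
print(control.state)
```

Checking `may_continue()` before every step is what lets a pause or stop take effect mid-investigation instead of only between investigations.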

You "hire" AI to perform a task: These are no longer tools; they are reasoning entities. The AI service already consists of multiple specialized agents monitored by a Supervisor. Very soon, we will introduce multiple levels of management with sub-supervisors and "team leads." That means developing Role-Based Access Control (RBAC) and agent versioning. Safeguarding the agentic data is going to be even more important than signing NDAs with your human staff.
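A bare-bones version of RBAC with agent versioning might look like the sketch below. The roles, permission names, and the `AgentIdentity` type are invented for illustration; a production system would load them from policy rather than hard-code them.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "worker": frozenset({"read_metrics", "read_traces"}),
    "supervisor": frozenset(
        {"read_metrics", "read_traces", "spawn_worker", "propose_action"}
    ),
}


@dataclass(frozen=True)
class AgentIdentity:
    name: str
    version: str  # recorded so actions can be audited per agent release
    role: str


def is_allowed(agent: AgentIdentity, permission: str) -> bool:
    # Unknown roles get no permissions at all (deny by default).
    return permission in ROLE_PERMISSIONS.get(agent.role, frozenset())


worker = AgentIdentity("tracing-worker", "1.0.0", "worker")
print(is_allowed(worker, "read_traces"))
print(is_allowed(worker, "spawn_worker"))
```

Denying by default matters more here than the specific roles: a newly introduced "team lead" agent gets no access until someone grants it explicitly.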

Continuously Learning Systems: Agents learn, quickly becoming experts in whatever systems they work with. The initial agent, just like a new SOC analyst on day one, will know very little, but they will quickly become the "adult in the room" with more access and more experience than most humans. This will create a massive shift in how security operations centers function.

Regardless of how you feel about AI Agents, it is clear that they are here to stay and evolve alongside their human counterparts. The question for SOC teams isn't whether to adopt agentic AI — it's how to design systems that allow analysts and agents to work together safely and productively.

Greg Nudelman. 16+ years shipping 34 AI products with $500M+ impact for Cisco, Intuit, IBM, GE, eBay, Sumo Logic, LogicMonitor. 24 patents. 6 books, including "UX for AI" (#1 Amazon New Release). 120 keynotes across 18 countries. Built an autonomous agentic SOC investigation platform in 2025, Forrester-rated at 166% ROI. Shipping AI security and observability tools SOC teams actually use.

References

1. Altman, Sam. Reflections. samaltman.com. January 5, 2025. https://blog.samaltman.com/reflections Collected Jan 21, 2025.

2. AWS re:Invent 2024 - Don't get stuck: How connected telemetry keeps you moving forward (COP322). AWS Events on YouTube.com. Dec 7, 2024. https://www.youtube.com/watch?v=ad42UTjP7ds Collected Jan 21, 2025

3. Kartha, Vijaykumar. Hierarchical AI Agents: Create a Supervisor AI Agent Using LangChain. Medium.com. May 2, 2024. https://vijaykumarkartha.medium.com/hierarchical-ai-agents-create-a-supervisor-ai-agent-using-langchain-315abbbd4133 Collected Jan 21, 2025

4. Mollick, Ethan. When you give a Claude a mouse. oneusefulthing.org. Oct 22, 2024 https://www.oneusefulthing.org/p/when-you-give-a-claude-a-mouse Collected Jan 21, 2025