The Easiest Way to Eat Your Security Data Salami (and Enjoy It)

... Is to slice it thin. Here's how.

By Greg Nudelman

A security team decides to build an AI-powered investigation platform. They identify 20 use cases. They get excited. They try to build for all 20 at once — one big prompt, one massive RAG, one vector database, everything mashed together.

Nine months later: no working product. Three dev teams burned through. Architecture debates that never end. And a board asking uncomfortable questions about where the money went.

They tried to eat the whole salami in one sitting. No knife. Just a room full of people staring at an intimidating stick of cured meat, not sure where to start.

Here's the thing about salami: it's not meant to be eaten whole. It's meant to be sliced. Thin. One piece at a time. You taste it. You decide if you like it. You have another slice. Then another. Before you know it, the whole stick is gone — and you actually enjoyed it.

Even better: invite your friends to the party. Everyone eats a few slices. You serve some Châteauneuf-du-Pape. Now you might need a second salami just to keep up. That's a good problem to have.

This is exactly how production security AI products get built. And I'm going to show you the exact pattern — using a real rule generation system built and shipped at a mid-sized cybersecurity company.

Round 1: One Thin Slice

The system was an AI-powered rule generation engine for security detection. The SIEM had over 1,000 detection rules across dozens of data sources — CloudTrail, GuardDuty, Route 53, Azure, GCP, and on and on. Writing and maintaining these rules by hand was slow, error-prone, and couldn't keep up with the evolving threat landscape.

Most teams would try to tackle all 1,000 rules at once. Boil the ocean. Eat the whole salami.

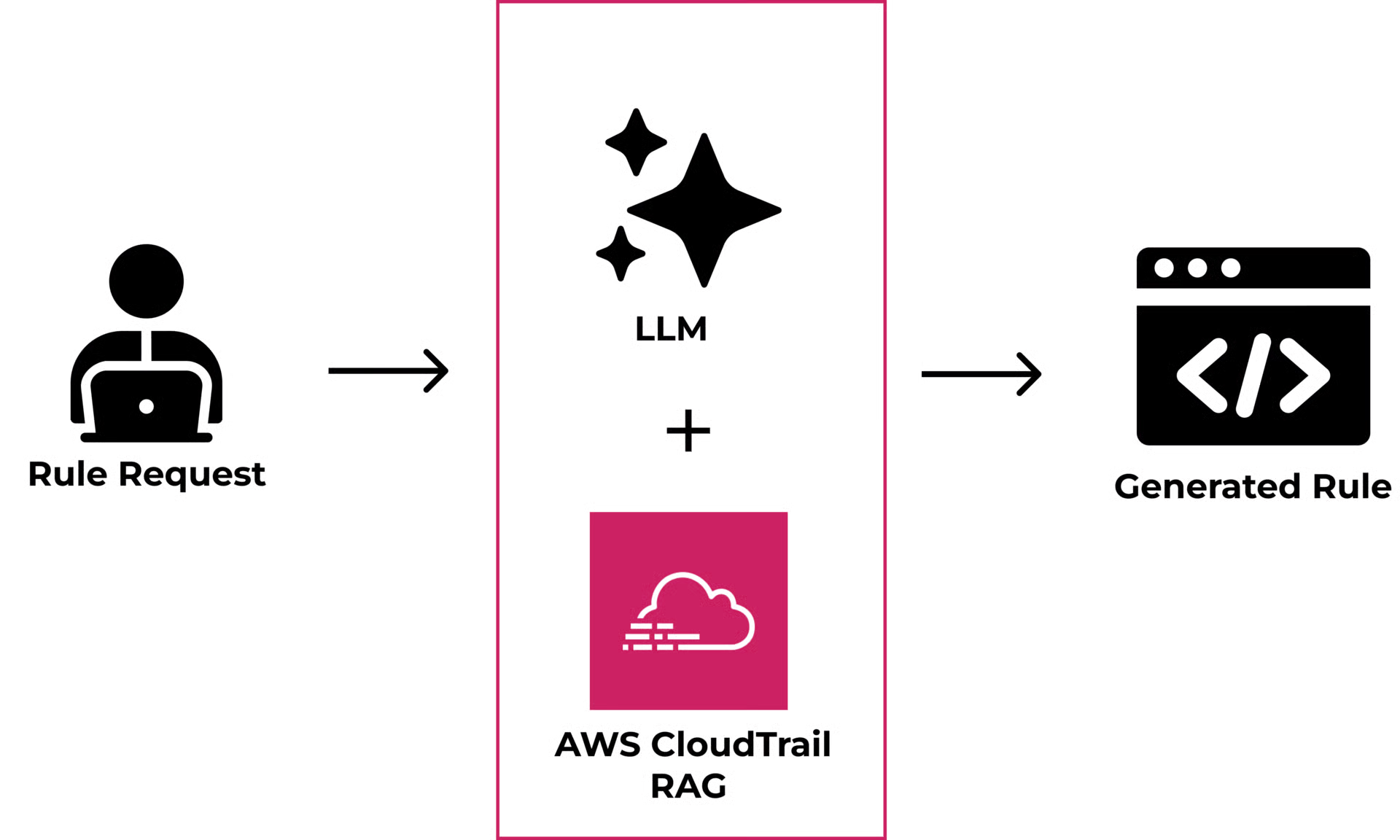

The right move: pick one data source. AWS CloudTrail. Just CloudTrail. About 15 detection rules. Tiny. Fits in the Claude context window without any changes — and that's the whole point.

15 RAG files taught the LLM how CloudTrail rules were structured — the detection logic, query syntax, variables, errors, and output format. Small enough to manually inspect every single generated rule. Small enough to test every output by hand. Small enough to optimize the living hell out of it in an afternoon.

One data source. 15 RAGs. 15 rules.

We validated it internally, then put the generator in front of security engineers and told them: this only knows CloudTrail. Nothing else. It won't generate rules for anything else.

No UI. Just LLM + RAGs.

Their response: "Oh my god, these rules are good. This will save me so much time. When can I have this? How much does it cost?"

That's how you know the salami tastes good. One thin slice. And they wanted more.

But here's what changed the architecture: what the analysts said next. They didn't ask for more data sources crammed into the same system. They said, "Can you make one that does this for GuardDuty? And one for Route 53?" They wanted more specialists, not a bigger generalist. That single insight — analysts think in data domains, not in unified platforms — shaped every architectural decision that followed.

Round 2: The Router

Now here's where most teams make a critical mistake.

They say, "Great, CloudTrail works! Let's add more!" and they dump everything into the same RAG system. GuardDuty rule structures, Route 53 formats, Azure alert schemas, GCP conventions — all mashed together in one RAG collection.

You have just set up your simple POC for failure in the SOC.

You're trying to optimize disparate things that were never meant to work together. Now you're struggling with weird hallucinations. Latency. Endless vector database infrastructure debates. Managing the context window. Nine months of whack-a-mole. You took your beautifully sliced salami and shoved all the pieces back into the casing.

Instead, the right approach is a second thin slice. A RAG tuned specifically for GuardDuty rule generation. Optimized independently, on its own terms.

Now you have two slices. Both generate high-quality rules on their own. The question becomes: how does the system know which RAG to use?

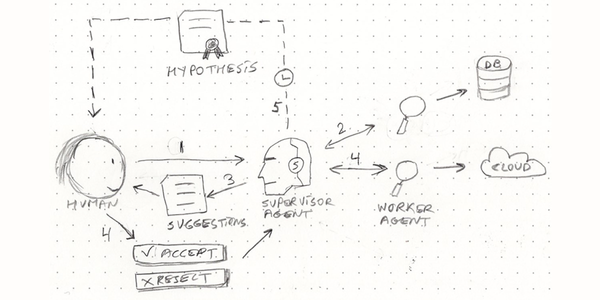

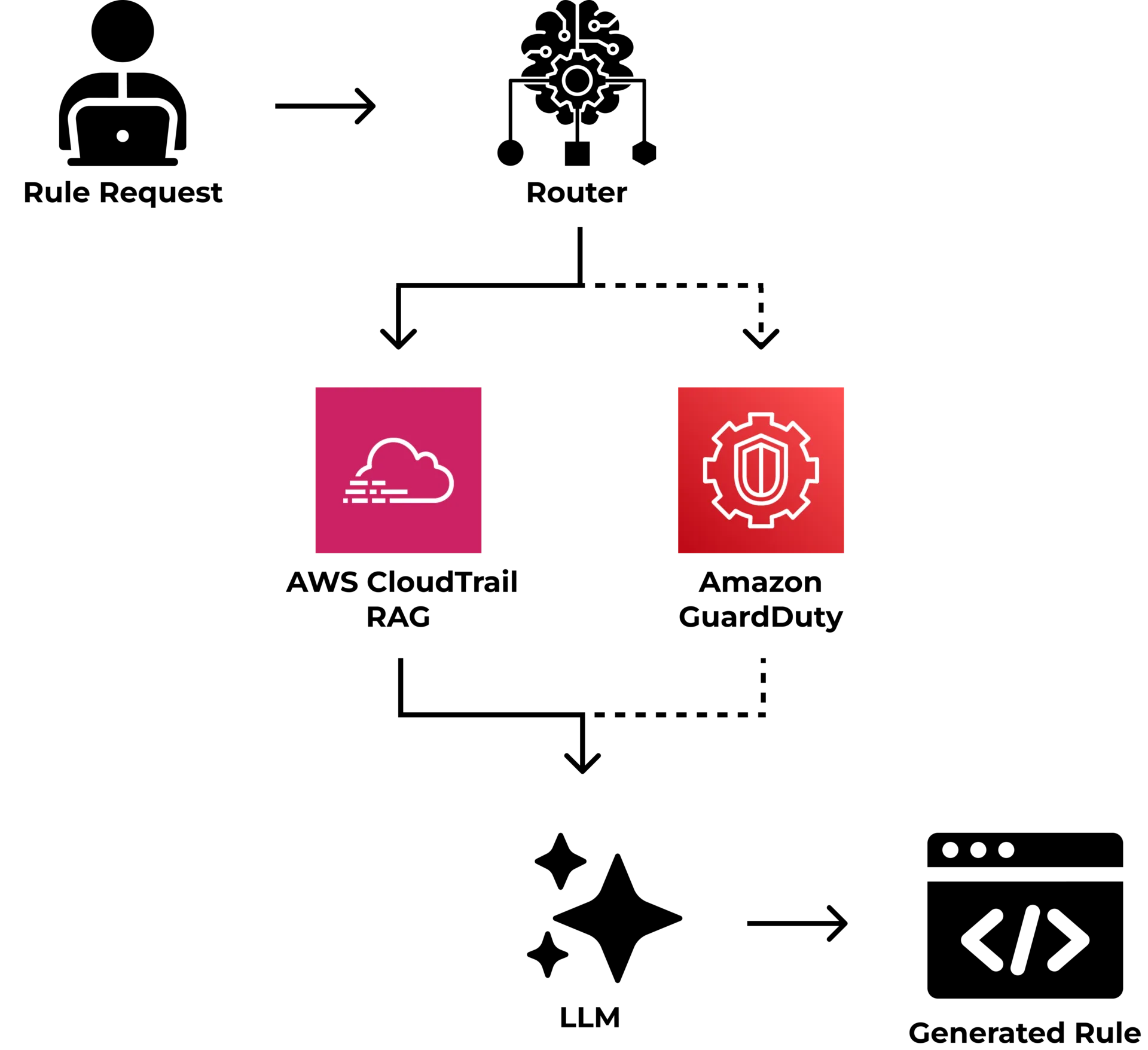

Add a router.

The router's job is dead simple: figure out the data category. The router reads the request and sends it to the specialist RAG set, which has already been tuned to answer that exact question.

No ambiguity. No judgment calls. Clean boundaries.

Why the Router Is the Whole Game

Without a router, you have one monolithic system trying to generate rules for every data source at once. That's the whole salami with no knife.

With a router, each LLM+RAG set is a specialist. It only knows how to work with one data source, but it knows that data source deeply. One is the world's expert in CloudTrail. Another has a PhD in GuardDuty. Hallucination drops dramatically because you're not asking the LLM to hold 20 different rule formats in its head at once. You're routing to an AI that is an expert in generating just one type of rule, using a RAG specifically tuned for that one data set.

This is why "works in POC" and "works in SOC" are two completely different things. The POC handles CloudTrail in isolation. Production means routing across CloudTrail, GuardDuty, Route 53, Azure AD, GCP — all hitting at once, all needing different query syntax, different schema structures, different detection logic. The router is what makes that work without the system collapsing under its own weight.

And it scales almost infinitely.

Give 10 teams 10 different data sources each. Now you have 100 specialized RAGs, all routing through the same classifier. The router doesn't care if there are 2 RAGs behind it or 200. As long as it can classify the data source — which is trivial, because the request usually tells you what it is — it routes to the right specialist RAG every time.

You scale horizontally. By adding delicious, easy-to-eat thin slices, not by enrolling your team in the Whole Salami Eating Competitions.

The Registry: How 15 RAGs Became 1,000 Rules

Once CloudTrail was dialed in — really working, customers buying before the demo was over — something changed the trajectory of the whole project.

The common patterns got extracted into a RAG Registry.

Every RAG file built for CloudTrail had a shared structure: how rules were formatted, how severity was classified, how the detection logic was expressed, and how the output was structured. Those common pieces got pulled out into a reusable template — a registry that captured what "good" looked like.

Then the LLM got pointed at the registry, given a few sample rules, and prompted: "Here's what a great CloudTrail RAG looks like. Now build me one for GuardDuty."

It did. And it was 80% right on the first pass. A few hours of tuning and it was production-ready.

Then Route 53. Then Azure. Then GCP.

15 RAGs became 1,000 rules in 2 weeks. And a beta AI product in just 6 weeks.

Not because anyone worked 20-hour days. Because the registry taught the LLM what quality looked like, and each new RAG was just another slice off the same salami. Same pattern. Same structure. Different data source. Different query language. Different schema. Same knife.

The snowball was rolling.

Same Knife, Different Security Data

This pattern works anywhere the system can cleanly identify an atomic use case and route to a specialist. In security, the boundaries are everywhere.

Alert triage is the obvious next target. Your SIEM generates alerts from dozens of sources — each with its own format, severity model, and investigation workflow. A Sigma rule firing on a Windows endpoint log looks nothing like a cloud-native GuardDuty finding. But the triage pattern is the same: classify the source, route to the specialist RAG that knows that source's schema and investigation playbook, generate the analysis.

Incident investigation works the same way. An analyst investigating lateral movement across Azure AD needs different context, different queries, different enrichment than one investigating data exfiltration through S3 buckets. One monolithic investigation RAG tries to know everything and knows nothing well.

Take the lateral movement case. A specialized RAG for that investigation type contains the Azure AD sign-in schema, the KQL queries that surface impossible-travel anomalies and token replay patterns, the enrichment sequence (resolve the user, pull their recent sign-in history, check for new MFA device registrations, correlate with endpoint telemetry for the same timeframe), and the decision tree an analyst actually follows — is this a VPN artifact or a compromised session token? Each of those steps has a specific data format and a specific query language. A generalist RAG that also knows about S3 bucket policies and CloudTrail API calls will hallucinate the KQL syntax half the time. A specialist RAG nails it because that's all it knows.

Specialized RAGs — one per data domain, one per investigation type — mirror how analysts actually think. Network is network. Endpoint is endpoint. Cloud is cloud. Identity is identity. The router does what the analyst's brain already does: identify the data domain first, then go deep.

Threat intel ingestion, vulnerability prioritization, playbook generation — the pattern repeats. Every domain in your SOC where the data has distinct structure and distinct workflows is a candidate for thin slicing.

You don't always need a classifier-based router either. Sometimes good information architecture does the routing. When an analyst clicks into a CloudTrail investigation, the system already knows which RAG to load. The analyst did the routing with a single click. Don't over-engineer something you can solve with good investigation experience design.

The Pattern

Slice thin. Build the RAG. Add the router. Extract the registry. Invite friends to the party.

That's how 15 RAGs become 1,000 rules in 2 weeks. And a beta AI product launch in 6 weeks.

Every team I've seen fail at security AI failed the same way: they tried to build the whole thing at once. Every team I've seen ship built one thin slice that worked, then scaled it with a router. The architecture isn't complicated. Having the discipline to start small and stay small until the slice is perfect — that's the hard part. And it's the only part that matters.

FAQ

"If this is standard, why don't more teams ship with it?"

It takes work. Not the "let me fine-tune a model" kind of work that AI engineers love to do — the "let me sit with a security engineer for three hours and understand exactly how they triage a CloudTrail alert" kind of work. Breaking things into thin slices requires deep understanding of how analysts actually investigate. And it takes translating that understanding into a technical architecture that mirrors their workflow.

That analyst workflow validation — riding along with Tier 1 and Tier 2 analysts, watching how they actually move through an investigation, mapping the decision points — is what separates AI that ships from AI that demos well. 85% of enterprise AI projects fail, and most of them fail here: they build technically impressive systems that don't match how analysts actually work.

"How does the router actually work?"

Most of the time, the classifier is simply another LLM specifically set up to evaluate the request. It has its own RAG file, but it's much more specialized — only focused on returning the data source classification. You can prototype one in about five minutes, but it may take a few hours to really get the routing accuracy you need in production. Which is exactly why Round 1 has no router at all. And no UI. It's a much simpler system that assumes a single data source, a single use case. Get the AI output right first. Add the router when you need it.

"Is this a proprietary technique?"

Not even close. The idea of routing inputs to specialized experts dates back to Jacobs, Jordan, Nowlan & Hinton's 1991 paper on adaptive mixtures of local experts. Google Brain scaled it in Shazeer et al.'s 2017 sparsely-gated mixture-of-experts work — the foundation of every modern MoE architecture. RAG itself was published by Lewis et al. at NeurIPS in 2020. What matters isn't inventing the patterns. It's combining them into a production architecture that ships product — and having the operational discipline to sit with analysts long enough to know where the slice boundaries actually are. The papers give you the theory. Production gives you the scars.

Greg Nudelman — 16+ years shipping 34 AI products with $500M+ impact. Built an autonomous agentic SOC investigation platform in 2025 (Forrester-rated 166% ROI, reduced investigation time from 60 minutes to under 3 minutes, $21M ARR saved). Featured at AWS re:Invent 2025. Shipping AI security and observability tools SOC teams actually use.